
Jetpack for Learning: Reflecting Pool

The internet is a potentially powerful tool for enhancing learning environments. However, its unstructured nature can present a challenge to both students and teachers. For example, students may lack the ability to pursue a learning goal strategically and therefore find themselves overwhelmed by the amount of information at their fingertips, wandering aimlessly. Teachers, on the other hand, may be at a loss as to how to assess students' learning in such a setting and unable to understand the connections that students are making.

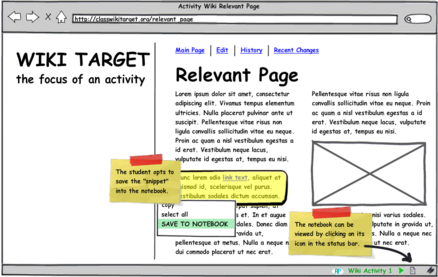

Reflecting Pool Mockup Screenshot


Reflecting Pool (see attached interactive PDF) aims to improve internet-based activities for students by increasing metacognitive awareness (i.e., planning, monitoring, and control of thinking) and for teachers by giving them greater access to the thought processes of their students. Furthermore, it offers a unique opportunity for all to understand and explore the collective knowledge of the class.

- Mike's blog


SMALLab on the Small Screen

The positions of the glowballs, and other information coming from SCREM, can be relayed to a little Nokia N800 palmtop over the WiFi connection.

Tracking is still a little rough, and, as I mention in the video, I think the IR cams are grabbing the N800 a little bit, so we'll need to keep it outside of the area--at least until the tracker is a bit more solid. Still, it should be a handy way to bring in another interface--and other participants--inside SMALLab. In fact, I gave the N800 to James in the office, and he could watch the movements of the balls through the wall.

I'm sure that's useful somehow.


3D Buttons and Sliders

Here is a quick video explanation of the HitArea and DragArea render engines.

The engines can track when a pointer enters and leaves them, and the DragArea engine also reports back the pointer's relative position--handy for sliders, etc.
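The enter/leave tracking and relative-position reporting described here can be illustrated with a minimal Python sketch. This is not the actual render engine code: only the names `HitArea` and `DragArea` come from the post, while the axis-aligned box shape, the `update` and `relative` methods, and the 0 to 1 scaling are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class HitArea:
    """Hypothetical axis-aligned box that notices a pointer entering or leaving."""
    lo: Vec3  # minimum corner of the box
    hi: Vec3  # maximum corner of the box (assumed larger than lo on every axis)
    inside: bool = False  # whether the pointer was inside on the last update

    def contains(self, p: Vec3) -> bool:
        return all(a <= v <= b for a, v, b in zip(self.lo, p, self.hi))

    def update(self, p: Vec3) -> Optional[str]:
        """Return "enter" or "leave" when the state changes, otherwise None."""
        now = self.contains(p)
        event = None
        if now and not self.inside:
            event = "enter"
        elif not now and self.inside:
            event = "leave"
        self.inside = now
        return event


@dataclass
class DragArea(HitArea):
    """Same tracking, plus the pointer's position relative to the box."""

    def relative(self, p: Vec3) -> Optional[Vec3]:
        """Pointer position scaled to 0..1 per axis, or None when outside."""
        if not self.contains(p):
            return None
        return tuple((v - a) / (b - a) for a, v, b in zip(self.lo, p, self.hi))
```

For a slider, one axis of the value returned by `relative` could be read directly as the slider setting, while a button would only need the `"enter"` and `"leave"` events.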


Faking 3D on a Flat Plane

We were experimenting with different ideas for how to represent the third dimension you have access to with the SMALLab installation. This is a trial using a camera viewpoint that tracks the position of the ball as you move through a space with it. It fools you into thinking that a 3D world is changing at your feet.

It's not a perfect solution. It only works for one person, and there are limits to how high you can pretend the Z-axis goes. Still, it's an interesting way of looking at the problem, and it might open more doors later on.
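One common way to get this kind of effect is to treat the tracked ball as the viewer's eye and draw each virtual point where the line from the eye through that point meets the floor. The sketch below shows that geometry only; it is an assumption about how such a trial could work, not the code used here, and the function name `project_to_floor` and the choice of the floor as the plane z = 0 are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def project_to_floor(eye: Vec3, point: Vec3) -> Tuple[float, float]:
    """Where on the floor (z = 0) to draw `point` so it looks right from `eye`.

    Assumes eye height is positive and that z grows upward from the floor.
    """
    ex, ey, ez = eye
    px, py, pz = point
    if pz >= ez:
        # The line from the eye through the point never reaches the floor.
        raise ValueError("virtual point must sit below the viewer's eye height")
    # Parameter along the ray eye -> point at which z reaches 0.
    t = ez / (ez - pz)
    return (ex + t * (px - ex), ey + t * (py - ey))
```

The error case reflects the height limit mentioned above: a virtual point at or above eye level has no floor position that would look correct. The single `eye` argument is also why the illusion only holds for one person at a time.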


Made Make!

The Pleech Instructable appeared on the MAKE: Blog today.

This is great for the project. One of the points I drove home during my presentation was that I wanted the Pleech to be as much about the process of building one and sharing improvements with people as it is the end product. Hopefully wider public exposure will help bring more people into this process and start a good debate on the best way to build things like this.


The Process for the Product

It is finished:

Pringles Wind Turbine (Pleech) - Version One

Well, actually, it's only just now getting started, really. The Instructable is part of a hopefully ongoing process for refining the Pleech concept by harnessing the power of the Instructables community. It's only been up for a few hours and already has a fan! Woowee!


Photos From Yesterday

Just some more data for you from yesterday's events. Here's me at the end of the table studiously assembling my MintyBoost:

Image licensed under the CC-BY-NC-ND. Thanks to pt for the image.

And here's me with a completed ShakeLight:


Me With Human Power DIY Flashlight

This is the human-powered flashlight I built at the Bent Festival at Eyebeam with a partner who had never soldered before and did a great job.



Copyright Mike Edwards 2006-2009. All content available under the Creative Commons Attribution ShareAlike license, unless otherwise noted.